The Iceberg Project: Project Overview

Telecommunications networks are migrating towards Internet technology, with voice over IP maturing rapidly. We believe that the key open challenge for the converged network of the near future is its support for diverse access technologies (such as the Public Switched Telephone Network, digital cellular networks, pager networks, and IP-based networks) and innovative applications seamlessly integrating data and voice. The ICEBERG Project at U. C. Berkeley is seeking to meet this challenge with an open and composable service architecture founded on Internet-based standards for flow routing and agent deployment. This enables simple redirection of flows combined with pipelined transformations. These building blocks make possible new applications, like the Universal Inbox. Such an application intercepts flows in a range of formats, originating in different access networks (e.g., voice, fax, e-mail), and delivers them appropriately formatted for a particular end terminal (e.g., handset, fax machine, computer) based on the callee's preferences.
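The combination of flow redirection and pipelined transformations described above can be illustrated with a minimal Python sketch. It is not taken from the ICEBERG code base: the names `ROUTES`, `PREFERENCES`, `deliver`, and the three stand-in operators are hypothetical, and the operators only tag strings where real ones would process media.

```python
from functools import reduce
from typing import Callable, Dict, List, Tuple

# A step transforms a payload from one format into another.
Step = Callable[[str], str]


def pipeline(steps: List[Step]) -> Step:
    """Compose steps left to right into a single transformation."""
    return lambda payload: reduce(lambda acc, step: step(acc), steps, payload)


# Hypothetical stand-ins for real media operators.
def speech_to_text(payload: str) -> str:
    return f"transcript[{payload}]"


def fax_to_text(payload: str) -> str:
    return f"ocr[{payload}]"


def text_to_speech(payload: str) -> str:
    return f"speech[{payload}]"


# Assumed table: (incoming format, end terminal) -> ordered transformation steps.
ROUTES: Dict[Tuple[str, str], List[Step]] = {
    ("voice", "handset"): [],                           # no transformation needed
    ("voice", "computer"): [speech_to_text],
    ("fax", "computer"): [fax_to_text],
    ("fax", "handset"): [fax_to_text, text_to_speech],  # two-stage pipeline
    ("email", "handset"): [text_to_speech],
}

# Assumed callee preferences: user -> terminal that should receive flows.
PREFERENCES: Dict[str, str] = {"alice": "handset", "bob": "computer"}


def deliver(callee: str, fmt: str, payload: str) -> Tuple[str, str]:
    """Redirect a flow to the callee's preferred terminal, transforming it on the way."""
    terminal = PREFERENCES[callee]
    steps = ROUTES[(fmt, terminal)]
    return terminal, pipeline(steps)(payload)


print(deliver("alice", "fax", "page1"))  # ('handset', 'speech[ocr[page1]]')
```

The point of the sketch is that the sender never needs to know the callee's terminal: the redirection decision comes from the preference table, and the format conversion is a chain of small, reusable steps.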

The design of the ICEBERG architecture is driven by the following types of services:

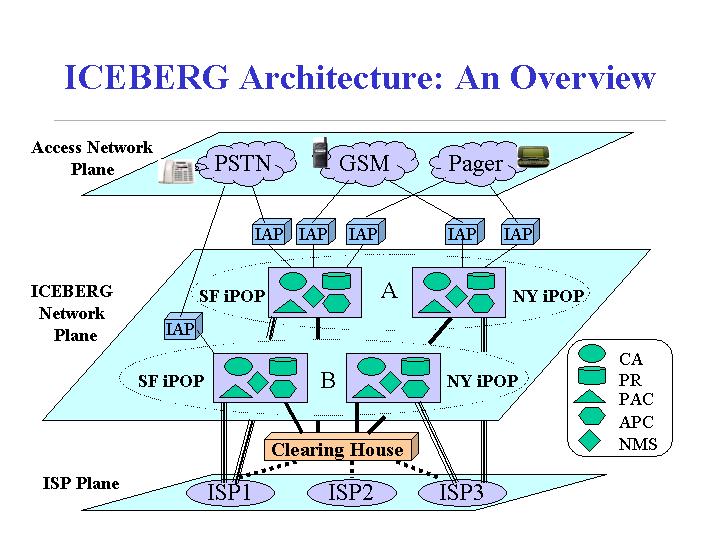

To enable any-to-any communications, integrated communication systems also need a component that inter-works with various networks for signaling translation and packetization. In our control architecture, this component is called an ICEBERG Access Point (IAP).

The MediaManager Service provides multi-modal access to the user's different mail repositories. It can perform intelligent transformations, such as summarizing a voice mail, and relies on a Transcoder Service for the data transformation.

Another important decision in our architecture design is to leverage cluster computing platforms for processing scalability and local-area robustness. Our control architecture requires processing scalability to a large call volume, continuous availability through fault masking, and cost-effectiveness. Clusters of commodity PCs interconnected by a high-speed System Area Network (SAN), acting as a single large-scale computer [NOW], are especially well-suited to meeting these challenges. Cluster computing platforms, such as [Ninja], provide an easy development environment for service developers and shield them from the cluster management problems of load balancing, availability, and failure management. We plan to build the ICEBERG system on Ninja for these benefits.

Our current release is built on the Ninja iSpace computing platform, which does not yet provide the cluster characteristics described above. As a result, this release lacks full-fledged fault tolerance and load balancing mechanisms. In addition, this ICEBERG release relies heavily on Remote Method Invocation for communication, which does not scale to a large number of simultaneous invocations. For these reasons, this release serves as a prototype for people to read and run at small scale.

The following figure shows a 5,000-foot view of how the ICEBERG system could be used in the real world. The ICEBERG network plane shows two ICEBERG Service Providers representing two different administrative domains: A and B. The services they provide are customizable integrated communication services on top of the Internet. Similar to the way that Internet Service Providers (ISPs) provide Internet services through Points of Presence (POPs) at different geographic locations, ICEBERG Service Providers consist of ICEBERG Points of Presence (iPOPs). Both A and B have iPOPs in San Francisco (SF) and New York (NY).

Each iPOP contains Call Agents (CAs), which perform call setup and control; an Automatic Path Creation Service (APC), which establishes data flow between communication endpoints; a Preference Registry (PR), which manages users' call-receiving preferences; a Personal Activity Coordinator (PAC), which tracks user location or activity; and a Name Mapping Service (NMS), which resolves user names in various networks. iPOPs must scale to a large population and a large call volume, be highly available, and be resilient to failures. This leads us to build the iPOP on the Ninja cluster computing platform. The ICEBERG network can be viewed as an overlay network of iPOPs on top of the Internet.
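To make the division of labor inside an iPOP concrete, here is a minimal Python sketch of how a call setup might consult the NMS, the PR, and the APC in turn. Everything in it is an assumption made for illustration: the `IPop` class, its field names, the format labels, and the use of breadth-first search to pick an operator chain are not described in the text above, and the PAC and the Call Agents' signaling are left out.

```python
from collections import deque
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class IPop:
    # NMS stand-in: network-specific identifier -> unique user name.
    name_map: Dict[str, str]
    # PR stand-in: user name -> data format of the preferred endpoint.
    preferences: Dict[str, str]
    # APC stand-in: format -> formats reachable through one operator.
    operators: Dict[str, List[str]]

    def resolve(self, network_id: str) -> str:
        """Name mapping: translate a network-specific id into a user name."""
        return self.name_map[network_id]

    def create_path(self, src: str, dst: str) -> Optional[List[str]]:
        """Find the shortest chain of formats from src to dst (breadth-first search)."""
        queue = deque([[src]])
        seen = {src}
        while queue:
            path = queue.popleft()
            if path[-1] == dst:
                return path
            for nxt in self.operators.get(path[-1], []):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(path + [nxt])
        return None  # no operator chain connects the two formats

    def setup_call(self, callee_id: str, caller_format: str) -> Tuple[str, List[str]]:
        """Call-agent role: resolve the callee, read preferences, request a path."""
        user = self.resolve(callee_id)
        target = self.preferences[user]
        path = self.create_path(caller_format, target)
        if path is None:
            raise LookupError(f"no path from {caller_format} to {target}")
        return user, path


# Hypothetical example data.
pop = IPop(
    name_map={"+1-510-555-0100": "alice"},
    preferences={"alice": "gsm"},
    operators={"pstn-voice": ["pcm"], "pcm": ["gsm", "text"], "text": ["email"]},
)
print(pop.setup_call("+1-510-555-0100", "pstn-voice"))
# ('alice', ['pstn-voice', 'pcm', 'gsm'])
```

Keeping the three lookups behind separate methods mirrors the separation of services in the iPOP: each could be replaced by a remote, replicated service without changing the call setup logic.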

The ICEBERG Project Update presentation given at the recent Endeavour retreat (June 2000) describes the latest research directions.

Here is a presentation on ICEBERG goals. The presentation was given on 9/16/98 at the BMRC MIG seminar series.

Here is an HTML PowerPoint presentation that outlines the goals and strategy of the project.